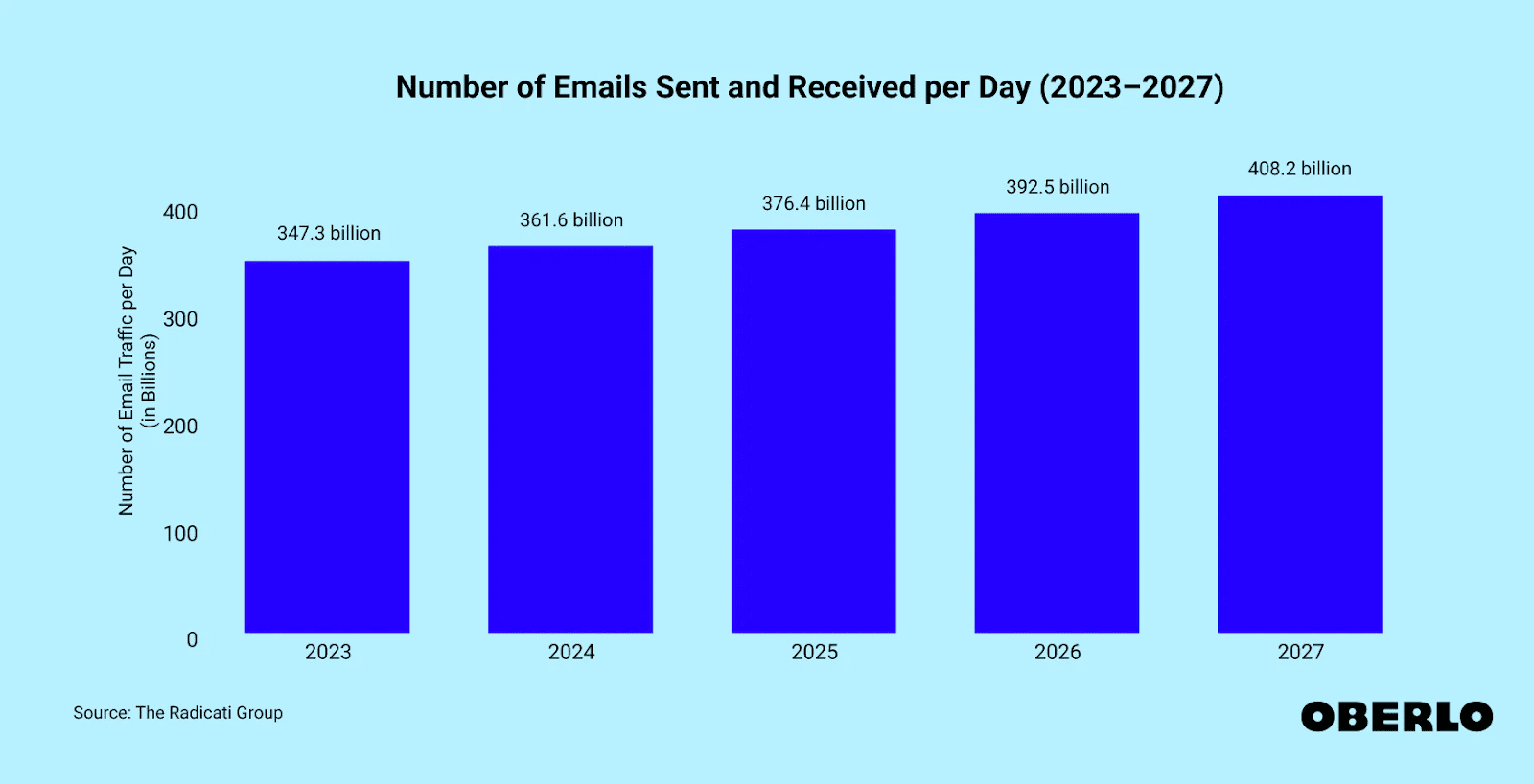

376 billion emails are sent and received every day.

To stand out in that crowd, you need good timing, 8 key elements, and—most importantly—email A/B testing (for each of those 8 key elements).

Is the best way to get your message (and product) in front of ideal customers SEO? Content marketing? CRO? Paid ads?

Yes, yes, yes, and yes.

Those channels are opportunities to reach and connect with prospects.

But 94% of marketing leaders say that email marketing is one of the top three most effective marketing channels.

This is only true if people open your email and click through to your website or landing page. The best way to do that is to run experiments and A/B test your way to success.


What is email A/B testing?

Email A/B testing, or split testing, is an email marketing strategy marketers use to experiment with different versions of emails to determine which performs best. You test two versions of your email, with slight variations between them, to determine which one gets you better results.

For example, you might split your newsletter subscriber list in half and send each group a different subject line.

The goal? To see which email subject line gets the most opens.

Once you learn what makes your audience click, you can better optimize campaign performance for even more wins.
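Here's a minimal sketch of that 50/50 split in Python. It assumes your subscriber list is a plain list of email addresses; the function name `split_for_ab_test` and the example addresses are made up for illustration, and most email platforms handle this step for you.

```python
import random


def split_for_ab_test(subscribers, seed=42):
    """Shuffle a copy of the list and cut it into two near-equal groups."""
    shuffled = list(subscribers)           # copy so the original order is untouched
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split repeatable
    midpoint = len(shuffled) // 2
    return shuffled[:midpoint], shuffled[midpoint:]


# Hypothetical subscriber list
subscribers = [f"user{i}@example.com" for i in range(1, 11)]
group_a, group_b = split_for_ab_test(subscribers)

print(len(group_a), len(group_b))  # 5 5
```

Shuffling before you split matters. If you cut the list in its stored order (say, by signup date), the two groups may differ in ways that have nothing to do with your subject line.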

Why is email A/B testing important?

When 376.4 billion emails are sent and received each day, knowing what cuts through the clutter for your intended audience gives you the insight you need to send emails that people

- Open

- Want to read

- Engage with (do the thing you want them to do)

Running email marketing campaigns without split testing leaves money on the table. Without it, there's no way to know what drives outcomes from your audience across your

- Subject line

- Offer

- Design

- Email copy

Overall, email A/B testing helps you achieve

- Higher email open rates

- Higher click-through rates

- More traffic to your website

- Increased conversions

- Decreased unsubscribe rates

- Lower (or zero) spam complaints

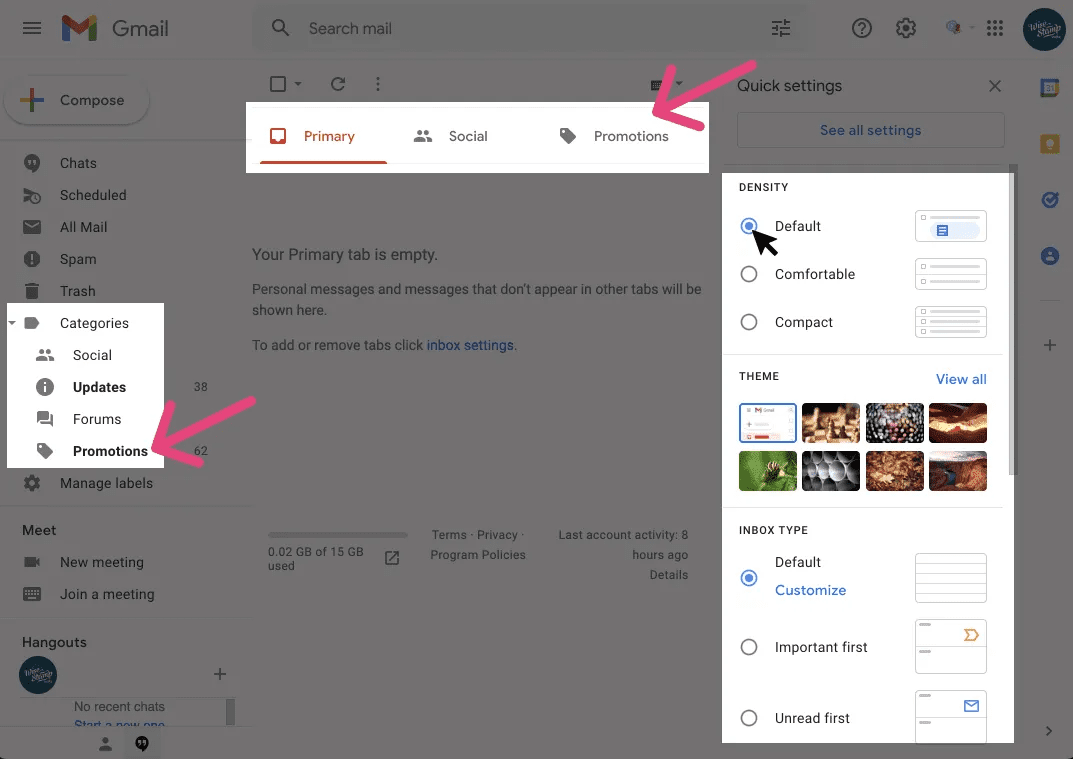

But improving these metrics is only one part of it. A/B testing also enhances the technical side of email marketing. If you don't test email deliverability, you risk your messages not hitting recipients' mailboxes at all and hurting campaign metrics. You also risk landing in a spam folder or promotional tab. 👎

Does the email look just as good on mobile as on desktop? If the readability is poor, deletions and unsubscribes may increase.

These are all things your email marketing stands to gain by A/B testing (or lose by neglecting it).

Make data-driven decisions

A/B testing opens the black box, giving marketers visibility into what works.

Data drives smarter decisions: you act on what works and don’t act on what doesn’t.

By testing different variables and analyzing the results, marketers see where to improve and can focus on how to optimize that part of their email marketing campaign. Data eliminates guesswork and focuses marketing dollars on the most effective tactics.

8 parts to A/B testing email campaigns

Email A/B testing is doable. In a nutshell, here are eight things to test:

- Subject line: Testing different subject lines can help marketers determine which one resonates best with their audience and drives the highest open rates.

- Email content: Testing different versions of email content, such as the tone, language, and length, can help marketers determine which approach is most effective at engaging their audience.

- Sender name: Testing different sender names, such as a personal name versus a company name, can help marketers determine which approach is most effective at building trust and credibility with their audience.

- Calls to action (CTAs): Testing different CTA buttons, such as the color, size, and text, can help marketers determine which approach is most effective at driving clicks.

- Email images: Testing different images, such as the type, size, and placement, can help marketers determine which approach is most effective at engaging their audience.

- Email layout: Testing different email layouts, such as the design and structure, can help marketers determine which approach is most effective at guiding the reader’s attention and driving conversions.

- Send time: Testing different send times, such as the day of the week and time of day, can help marketers determine which approach is most effective at reaching their audience when they are most engaged.

- Personalization: Testing different levels of personalization, such as using the recipient’s name or tailoring the content based on their interests or past actions, can help marketers determine which approach is most effective at building a connection with their audience.

1. Subject line

Ahh, email subject lines.

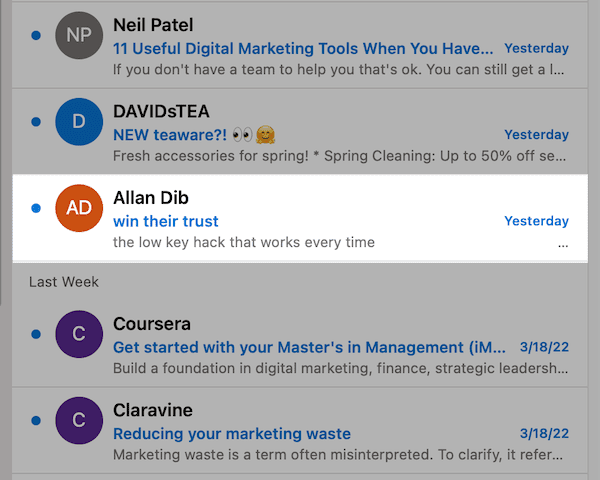

They're the first thing people see and the biggest factor in whether or not they open. Your inbox is a feed. If your subject line doesn’t stop the scroll, odds are it will be missed, deleted, or (worse) marked as spam.

Subject lines anchor every email marketing strategy. They are the primary variable to A/B test in every email and for good reason. Optimizing your subject line significantly improves open rates.

And once they peek inside, the odds of everything else go up.

Get this right, and you win clicks from your target audience without poring over every other excruciating detail.

But what exactly do you test?

- Different subject line lengths (optimal being 4–7 words)

- Personalization (name, company, interests, etc.)

- Adding emojis

- Conversational (What do you think?) vs. descriptive (Introducing our new LED mask)

2. Offers and Calls-to-action (CTAs)

Nothing screams “open me” like an email with a special offer. But don't just add a discount and call it a day. There are various ways to make an offer sound (or look) better, and as a result, drive up click-through rates.

You might test

- Including the special offer in the subject line

- Presenting a discount as a dollar amount or percentage

- The total amount of the discount itself

- Adding a tight timeframe (e.g., 24 hours left)

When testing offers and CTAs, consider marketing's “Rule of 100,” which states that discounts on products under $100 look better as a percentage. If the product's over $100, a dollar amount is more appealing.
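To see why, run the numbers. The sketch below is a simple illustration of the Rule of 100, not a pricing tool: the function name `frame_discount` and the prices are made up, and it treats a product at exactly $100 as a dollar-amount case since both framings show the same number there.

```python
def frame_discount(price, discount_amount):
    """Pick the discount framing that shows the bigger number (Rule of 100)."""
    percent_off = discount_amount / price * 100
    if price < 100:
        # Under $100, the percentage is the larger number
        return f"{percent_off:.0f}% off"
    # At $100 and above, the dollar amount is the larger (or equal) number
    return f"${discount_amount:.0f} off"


print(frame_discount(80, 20))   # 25% off  (25 looks bigger than $20)
print(frame_discount(250, 50))  # $50 off  (50 looks bigger than 20%)
```

Treat the output as a starting hypothesis. Your A/B test decides whether your audience actually responds better to one framing.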

In the same vein, you can also test your call-to-action that pushes your offer. For instance, you can

- Test different CTA copy

- Try different placements for the CTA button

- Change the color of the CTA button

- See if a CTA link or button works better

Here's an example from Vitacost, which has not one, but two call-to-action buttons in different areas of the email:

3. Design and format

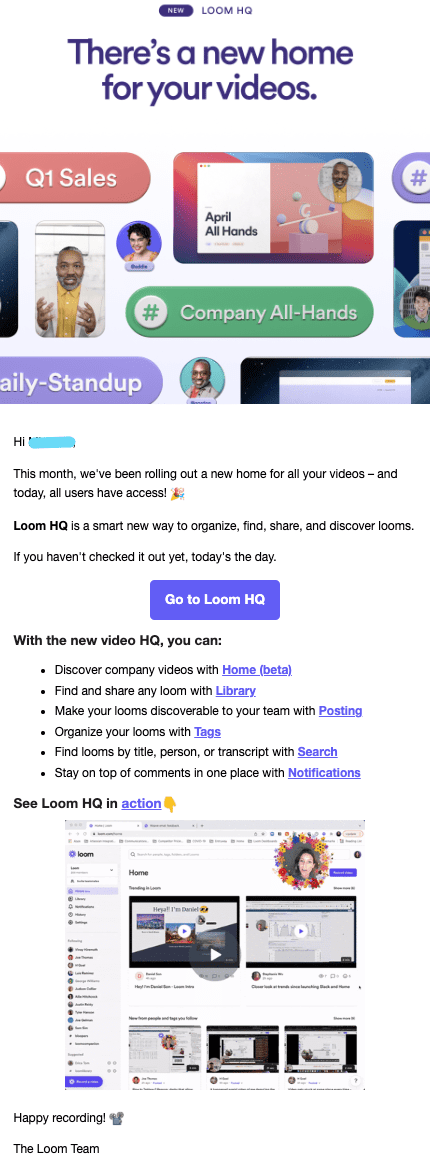

Plain-text vs. HTML? Images or no images? It's not your decision to make. At least, not until the people have spoken.

It's another reason to A/B test emails to see what works. Because—trust us—these things will impact your email marketing success.

Some emails (like newsletters) perform better with text and simple visuals sprinkled throughout. Others (like promotional emails) fare better with an interactive HTML email design.

Here's an example from Loom, which formats its email using a mix of plain-text and HTML design elements:

ClickUp, on the other hand, goes all out with HTML designs and even includes GIFs.

Once you determine what works and resonates with your audience, you can build email templates based on your findings to speed up the process.

4. Email length

What's going to work better for you? Short emails that are sweet and to the point? Or longer emails with in-depth details, complete with a FAQ section?

Test your email's length to identify the perfect sweet spot. Again, the length will depend on the type of marketing email you send and the goals you set.

A newsletter is going to require some more real estate, while a flash sale promo email might only need a single headline.

Here's a great example from Wayfair:

Just one sentence and attractive imagery with a prominent CTA button front and center. (This typically works well in eCommerce email marketing because consumers are quicker to buy or at least window-shop.)

5. Time of day and frequency

The time of day you send emails matters because it can determine whether they're opened or overlooked. Some folks are early birds and like to start the day in their inbox. Others prefer to wait until late in the morning or early afternoon to read messages.

So, what’s the best time to send your email?

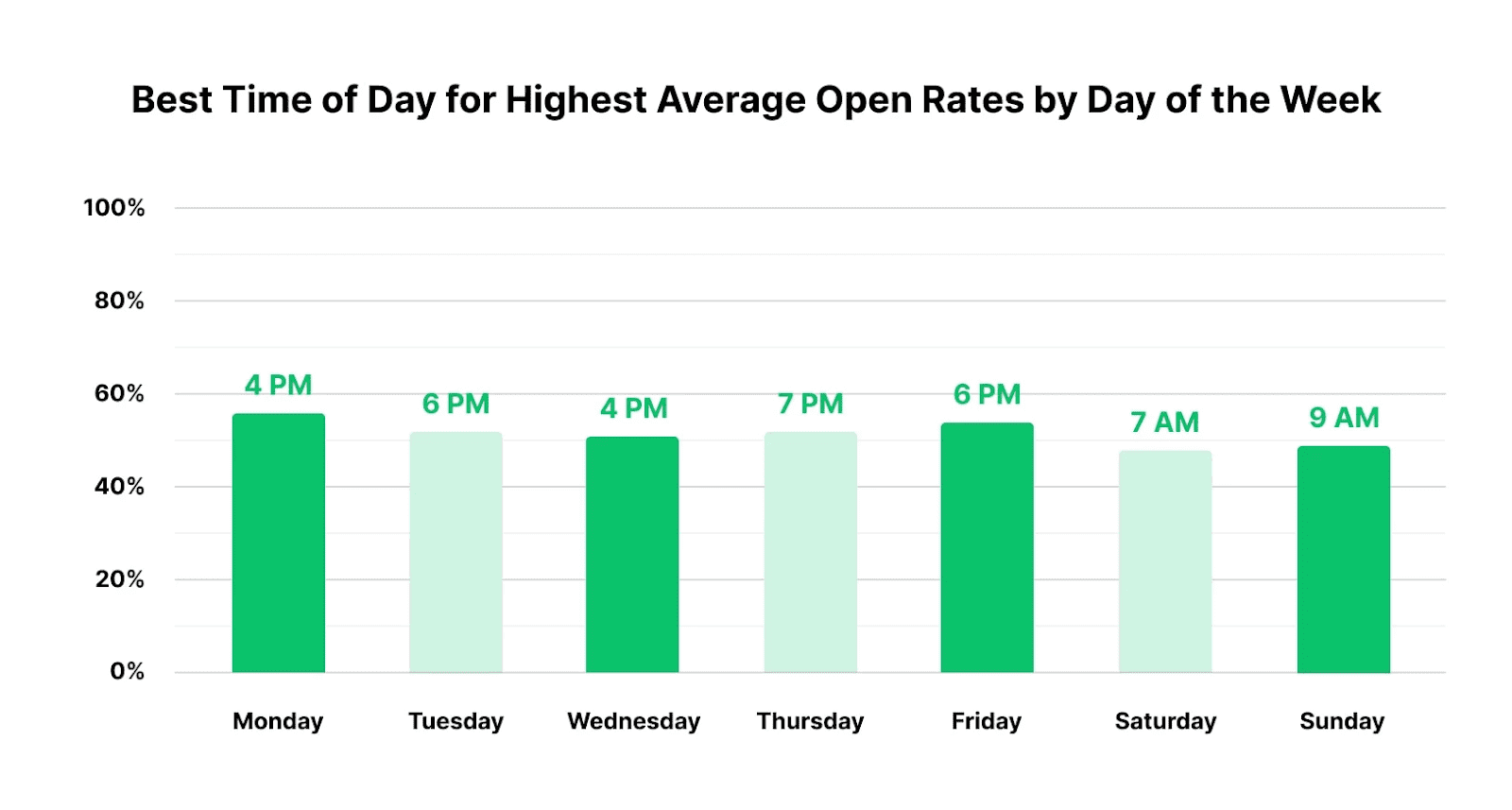

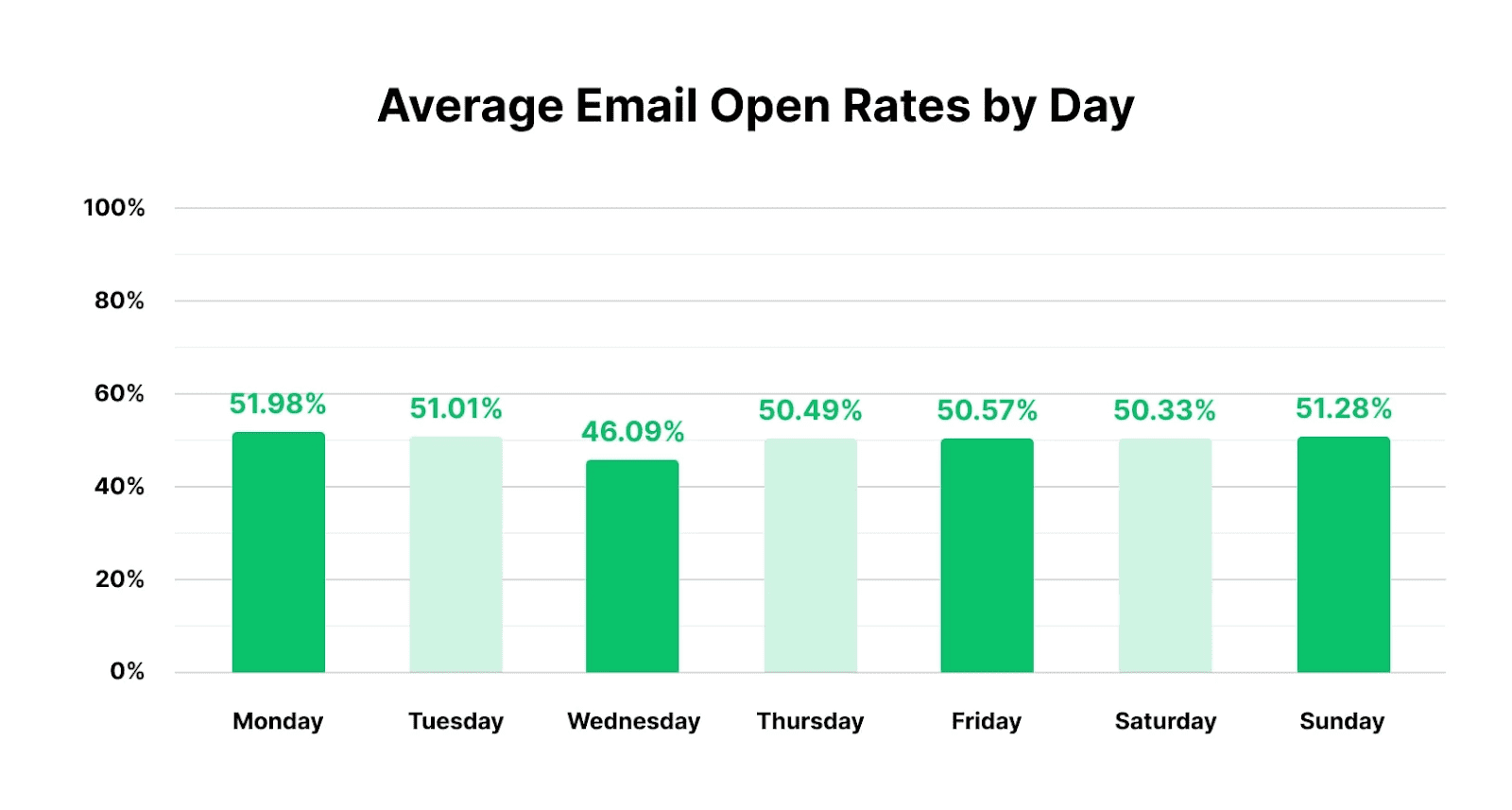

A recent MailerLite report found that the best time to send emails varies by day of the week:

The same report also found that open rates were fairly consistent throughout the week.

Of course, you should test every time and day of the week to find what works best for your audience. You might find that your audience prefers going through promotional and branded emails at night or over the weekend.

You also want to test how frequently you send your marketing emails.

Every day might be laying it on a bit too thick and will have your list running for the hills, while once a month will have your audience scratching their heads to remember who you are.

Find the frequency that works best for both you and your audience. Then stay consistent with it.

6. Personalization

There's a lot to test here, and we recommend you don't skimp on this. For starters, 80% of consumers are more likely to purchase from brands that provide a personalized experience.

This extends to your marketing emails.

Personalization can come in many forms, including

- Using customer data to recommend products just for them (it's said that a whopping 91% of consumers are more likely to buy from brands that remember and recommend relevant offers)

- Using the subscriber's name in the subject line

- Sending birthday or anniversary offers

- Sending emails based on actions (e.g., abandoned cart)

- Localizing your emails by making references to local events, stores, and more

- Loyalty-based messaging like acknowledging VIP customers with exclusive perks or early access to sales

- And so much more

Take this Credit Karma example below, where they personalize their email by calling out the recipient's credit score.

This works if you have user accounts to gather information from (e.g., search or purchase history data). Test to see if personalizing works or if your audience cares more about the offer than what you know about them.

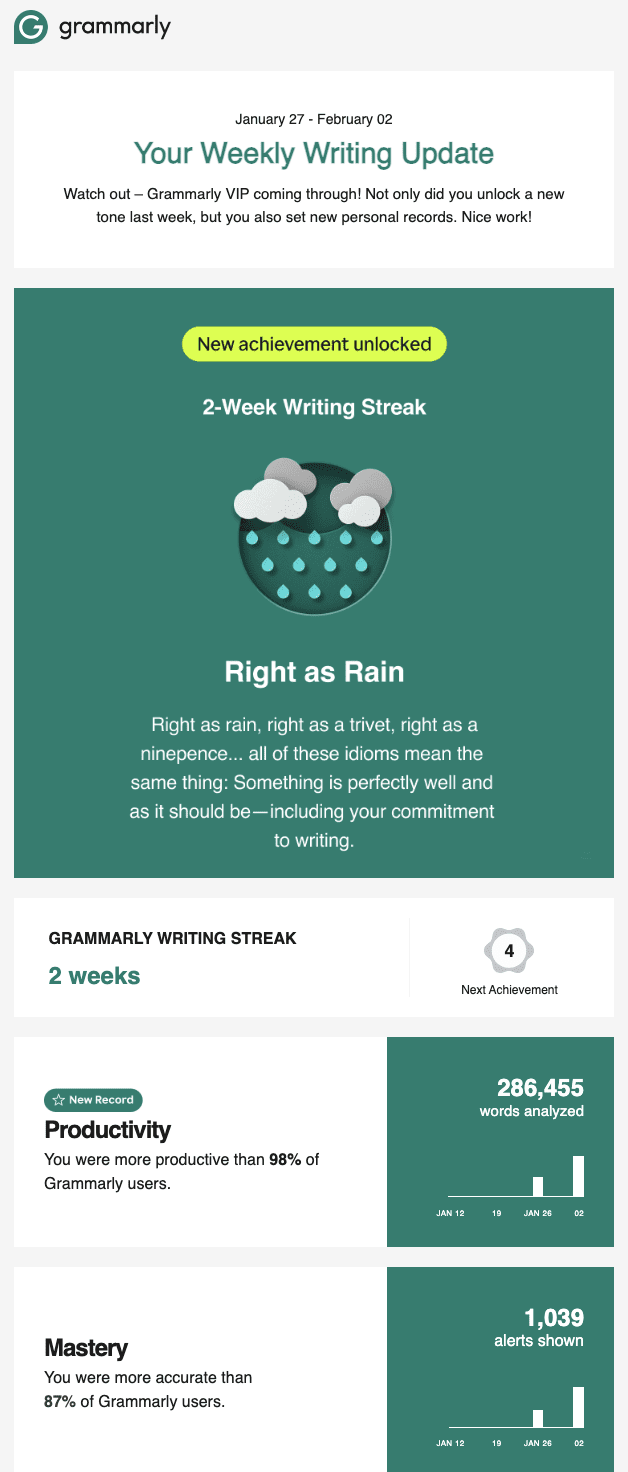

Another great example of personalization is from Grammarly. They personalize a weekly email to let customers know how their writing habits compare to other Grammarly users.

7. Social proof

Would adding some social proof result in higher open rates?

This is something you could determine in another email A/B test. Try adding social proof to your emails to see if that results in a lift in opens and clicks.

You might include social proof in the subject line or give it its own section in the email content. Not only can you test where to put your social proof, but you can also experiment with different types of social proof. For example, you might test the effectiveness of

- Testimonials

- Star ratings

- Linking to your case studies

- Positive press or PR

- Security badges

- Including your client list

Here’s an abandoned cart email from Soltech, an indoor light company for plants:

The possibilities are endless with social proof, and the only way to really find out what works for your audience is to test your way there.

8. Preview text

Don't sleep on the power of the preview text. It's the second thing subscribers look at before clicking an email (if the subject line wasn't enough). Use this to reinforce your message and drive home a click.

Here's an example from Wayfair, which promotes a two-day clearance sale in its subject.

Then in the preview text, it follows up by using

- FOMO (fear of missing out)

- A high numerical discount

- Free shipping

And before it clips off, you see financing is an option—great news for those who like buy-now-pay-later deals.

I'd open this email.

On the other side of the spectrum, you can also be more conversational in your approach. For example, Growclass, a growth marketing course and community, is on a first-name basis with most of its audience via its community channels. So, it doesn’t need all of the bells and whistles in its emails.

They can just be conversational:

10 best practices for split-testing emails

Split-testing emails isn’t random.

Don’t pick areas of an email to change willy-nilly, test them for a day, and bolt down the winning version as doctrine. Be methodical so you don’t waste time and money on fruitless efforts.

Here’s a list of best practices to follow when planning and executing your email A/B tests:

- Create a hypothesis: Don't randomly select a component to test in your emails. Hypothesize why you think this area can improve results for the goal you want to achieve (e.g., increase open rates vs. increase click-through rates).

- Focus on high-impact, low-effort variables: Don't waste time on variants that don't impact KPIs. Instead, focus on areas like subject lines, CTAs, offers, and other changes that provoke action.

- Get the timing right: Avoid sending test emails during seasonal changes that can taint your results. For instance, if everyone's on spring break, email opens will be unusually low.

- Test one variable at a time: Focus on one component to change in each test to know exactly what's improving your results.

- Wait a few weeks for the final results: Check A/B test results a couple of weeks after the email campaign so late opens and clicks are counted before you judge statistical significance. Your data after one day will be different from your data after two weeks.

- Analyze and test again: Look at the results, analyze what you see and why, then run more tests to ensure accuracy.

- Run a test before you start: Yep, test your test. Just like you would with any email, send yourself a test email to ensure there are no errors in the copy, design, or deliverability.

- Determine the test sample size: Make sure each test group is large enough to produce statistically significant results.

- Keep a control version: Always have a control version that doesn't change to test the variations against (e.g., 60% receive the control version, 20% get Version A, and 20% get Version B).

- Use email automation: An email automation provider (e.g., Mailchimp) sends each version to the right segment on schedule, so a forgotten send or a mixed-up segment doesn't flub your test results.
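Several of the practices above lean on "statistically significant results." One common way to check that is a two-proportion z-test on the open (or click) counts of two versions. The sketch below assumes that approach and uses made-up numbers; the function name `two_proportion_z_test` is ours, and your email platform may use a different method.

```python
import math


def two_proportion_z_test(opens_a, sent_a, opens_b, sent_b):
    """Return (z, two-sided p-value) for the difference between two open rates."""
    rate_a = opens_a / sent_a
    rate_b = opens_b / sent_b
    # Pooled rate: the open rate if both versions performed the same
    pooled = (opens_a + opens_b) / (sent_a + sent_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    if std_err == 0:
        raise ValueError("Cannot test when every email (or no email) was opened.")
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical results: Version A got 200 opens from 1,000 sends, Version B got 250
z, p_value = two_proportion_z_test(200, 1000, 250, 1000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
print("Significant at 5%" if p_value < 0.05 else "Not significant yet")
```

A p-value under 0.05 is a common (if arbitrary) cutoff. If you land above it, the safer read is "keep testing," not "Version A wins."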

Improve email performance with A/B testing

Email marketing has great potential to increase your business's conversion rates and revenue. But only if you know how to trigger your audience to act.

Since there's no way to read minds or guess your way to success, you A/B test emails to achieve those results.

Use this guide to start split-testing email marketing campaigns like a pro.

Then check out this list of 50 email marketing examples for inspiration.

FAQ

What’s a good sample size for A/B testing?

When A/B testing email campaigns, it’s essential to ensure that the sample size is large enough to produce statistically significant results. If a sample size is too small, it can lead to inaccurate results and make it difficult to determine which version of the email campaign is performing better.

HubSpot recommends an email list of at least 1,000 contacts to ensure you can achieve statistically significant results.
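If you want a number tailored to your own list, you can estimate it. The sketch below uses a standard sample size formula for comparing two rates, with a 5% significance level and 80% power as assumed defaults. The function name `sample_size_per_group` and the example rates are hypothetical, so treat the result as a rough guide.

```python
import math
from statistics import NormalDist


def sample_size_per_group(baseline_rate, expected_rate, alpha=0.05, power=0.8):
    """Estimate how many recipients each version needs to detect the lift."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


# Hypothetical goal: lift open rate from 20% to 25%
n = sample_size_per_group(0.20, 0.25)
print(f"Send each version to about {n} recipients")
```

With these example rates, the estimate comes out to roughly 1,100 recipients per version. Smaller lifts need much bigger groups, which is one reason subject line tests (where differences tend to be larger) are a good place to start.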

Is split testing the same as A/B testing?

Yes, split testing is essentially the same as A/B testing! Both terms compare two (or more) variations of something, like a webpage, email, or ad, to see which performs better.

In A/B testing, you typically test two versions (A and B), while split testing often implies a similar concept but can sometimes be used for testing more than two variations or even splitting traffic into different groups more evenly. But in practice, people often use "split testing" interchangeably with "A/B testing" when discussing testing two versions of something.

With either, you analyze the results, optimize your email campaigns, improve click-through rates, and fine-tune your overall email marketing strategy to achieve higher engagement.

How does subject line optimization improve my email campaigns?

Testing email subject lines helps you see what wording, tone, or format resonates most with your audience, leading to higher open rates and better click-through rates. Test length, personalization, and tone.

What metrics should I track when A/B testing emails?

Key metrics to track include open rate (the share of recipients who open your email), clicks (how many times someone clicked your call-to-action), click-through rate (the share of recipients who click a link in your email), and conversion rate (the share who complete your desired action). These metrics show you what parts of your email campaigns are working so you can put your email marketing efforts toward the right elements in future campaigns.
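Here's a quick sketch of how those rates are typically calculated. Definitions vary between email platforms, so the choices here are assumptions: open rate and click-through rate are divided by delivered emails, and conversion rate is divided by clicks. The function name `email_metrics` and the numbers are made up.

```python
def email_metrics(delivered, opens, clicks, conversions):
    """Compute common A/B test metrics from raw campaign counts."""
    return {
        "open_rate": opens / delivered,
        "click_through_rate": clicks / delivered,
        # Click-to-open rate: of the people who opened, how many clicked
        "click_to_open_rate": clicks / opens if opens else 0.0,
        # Assumption: conversions are measured against clicks, not sends
        "conversion_rate": conversions / clicks if clicks else 0.0,
    }


# Hypothetical campaign: 1,000 delivered, 250 opens, 50 clicks, 10 conversions
metrics = email_metrics(delivered=1000, opens=250, clicks=50, conversions=10)
for name, value in metrics.items():
    print(f"{name}: {value:.1%}")
```

Whatever definitions you pick, use the same ones for every version in a test. Comparing Version A's click-through rate against Version B's click-to-open rate tells you nothing.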